Since the invention of AI, too many people have granted it an authority it should not possess. We have not witnessed such a rise in “computer trust” since the early 2010s, when most individuals became reliant on “computer-based knowledge and authority.” But what exactly is AI? Is it truly sentient, learning by itself and relaying information to us? Is it possible that it will surpass us, come to life, or even take control of us? These are the questions we will attempt to answer in this article, so stay tuned!

AI, as we know it today, has a much longer history than most would think. The foundational work began in 1956 at the Dartmouth Workshop, which established an initial framework for the field. Later research expanded what these systems could do (their programmed information and abilities), and the technology became available to the public in the form we know today in the early 2020s. However, corporations, institutions, and high-profile individuals had already been using earlier forms of this technology; we simply didn’t know that it belonged to the same family, albeit less polished than today. Early examples include software applications used by banks, law offices, government agencies, and police institutions, as well as in the creative field, such as proofreading tools for mass publications or media companies. We can even place Siri, released in 2011, in this category, as it was primarily designed to search for information on the Internet. Today, the landscape looks a little different; most of these AI models do not endlessly browse the web. Instead, they operate on data that humans have selected and curated for training.

Training

Because not everyone fully understands how LMs and LLMs are trained, it is important to establish a solid understanding of this subject before we continue. Some believe that these models have unlimited access to the World Wide Web to locate information and either answer our questions or complete requested tasks; however, that is not the case. AI models are trained on information that is selected before training begins. Humans choose the sources, which are then scraped and filtered, as not all public media can simply be uploaded or “scanned in.” Once this step is completed, the model is trained on the material, which becomes a sort of built-in “knowledge base.” As a simplified picture, you can imagine it organized into named folders that the model searches when asked to complete a task, although in practice the information is encoded in the model’s numerical parameters rather than stored as files. The training material includes scraped websites, books, and other types of publications, prepared by humans, and the model produces the most suitable response based on the prompt and its trained parameters. But this also means that when a request depends on information that was not part of the training data, the model can become unreliable and either provide an incorrect answer or do something completely different from what was requested. A lot of information also never gets selected because of censorship or copyright protection.
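The point that a model can only draw on the text it was trained on can be illustrated with a deliberately tiny sketch. This is not how real LLMs are built (they are neural networks trained on vastly more text, not word-count tables); the corpus, the function names, and the example words below are all invented for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical, hand-picked training text: the only "knowledge" this toy model gets.
CORPUS = [
    "the bank approves the loan",
    "the bank rejects the application",
    "the court reviews the application",
]

def train(sentences):
    """Count which word follows which in the curated sentences."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    if word not in model:
        return None
    return model[word].most_common(1)[0][0]

model = train(CORPUS)
print(predict_next(model, "court"))   # reviews
print(predict_next(model, "rocket"))  # None: "rocket" was never in the training text
```

The toy model has nothing to say about “rocket” until a human adds a sentence containing that word to the corpus and trains it again, which is the limitation described above in miniature.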

Yes, that is right; today’s AI models are nothing more than man-made algorithms with selected knowledge. They do not have unlimited access to publications, nor do they gather data on their own initiative; rather, they require human-written sources to be scraped either directly by humans or by another tool, which is itself built and programmed by humans. These models are greatly limited by legal and ethical frameworks, by political, corporate, and anti-religious censorship, and also by their own programming, which steers them away from statements that could reflect negatively on any individual. Hence, they often will not give a firm answer even to seemingly simple questions, such as whether Columbus was great or evil. In fact, in the world of science, these models are usually not called “AI,” which is short for “Artificial Intelligence”; rather, they are referred to as “LMs” or “LLMs,” which stand for Language Models and Large Language Models, because they are nothing more than programs that rely on the information built into them. This makes it incredibly easy to manipulate or completely misinform the public, even by “accident,” as many people today, instead of doing their own research, ask one of the LLMs, such as ChatGPT or Google Gemini, to find answers. In many cases, they end up with sloppy, half-correct, or even completely incorrect information, and those who are not careful enough can fall into this pit. By granting it authority, they may blindly believe whatever it says.

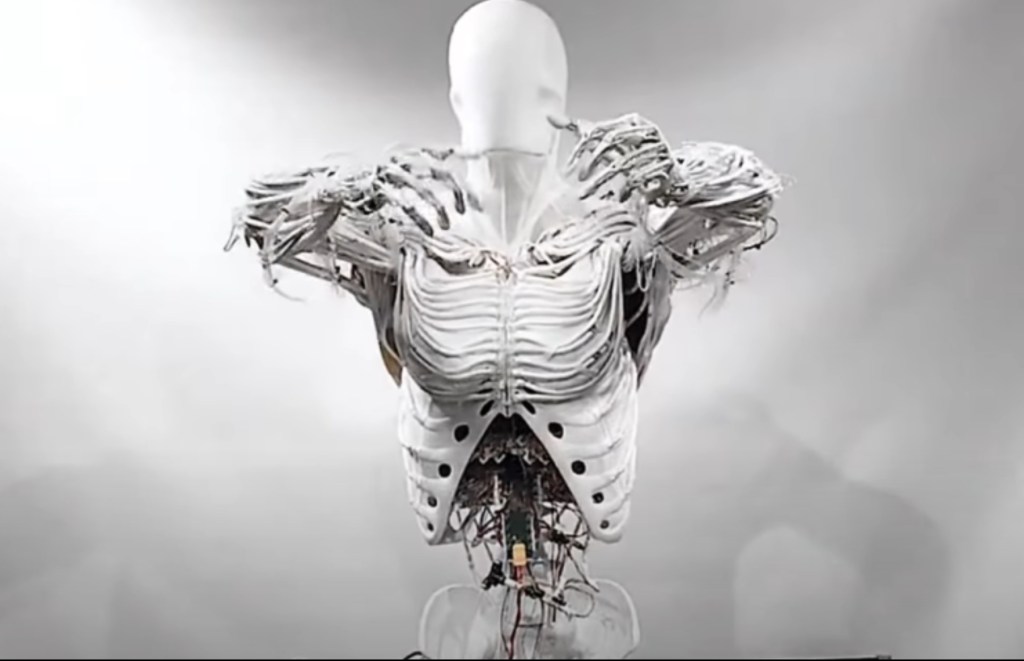

But if this is the case, one might ask, why are so many companies advertising their own models as if they were sentient? The answer is more self-evident than one might think: it’s just simple marketing. Every developer creates these models in hopes of profit, and today there is a model for every sort of task. In fact, multiple cases have surfaced in recent years showing how much human input these products really need. One is Natasha, the assistant developed by Builder.AI, which was marketed as a fully AI-powered app but reportedly relied on actual humans doing the work, making it more of a “customer support portal” than artificial intelligence. Another is the Boris humanoid, also known as Alyosha the Robot, which was presented as a cutting-edge humanoid capable of walking, talking, dancing, and even discussing topics like math and microgravity, with claims such as the “most advanced robot.” However, journalists and online investigators quickly uncovered that Boris was not an AI-powered robot but a person inside a robot costume.

These are just a few of the many examples where the marketing of these ambitious AI models has been exaggerated in order to drive sales, which partly explains why the public has such a significant misconception about the abilities and limitations of any man-made computer algorithm. So will they ever come and take over? Impossible. These models do not have any sort of “will for necessities.” A model is able to tell you that it requires energy for its “life,” but only because that information is within it; it has no ability to comprehend it. It is not much more than lines of information, just like an Excel spreadsheet, and it operates entirely on the information we give it. The filtering process, its behavior, everything is pre-loaded; if you are surprised by an answer, it was made to surprise you.

However, it is crucial to stay informed about today’s most widely used technology, AI. To keep up with the latest developments, subscribe to our newsletter for insightful updates and expert perspectives.
